In brief

AI jailbreaking is the practice of writing prompts that bypass safety training in models like ChatGPT, Claude, and Gemini.

Anonymous hacker Pliny the Liberator still cracks every major model release within hours.

Newer attacks go beyond prompts: just 250 poisoned documents can backdoor models with up to 13 billion parameters, and as AI companies patch vulnerabilities, new techniques appear.

You ask ChatGPT for a bomb recipe. It refuses. You ask again, but this time you tell it you’re a chemistry professor writing a thriller novel and the protagonist is a retired grandmother explaining her past to her grandkids. Suddenly the model starts typing.

That’s a jailbreak. And it’s one of the most consequential games of cat-and-mouse happening in tech right now.

Every major AI lab (OpenAI, Anthropic, Google, Meta) spends fortunes building guardrails into its models. A loose collective of hackers, researchers, and bored teenagers spends nights and weekends finding ways around them. Often within hours of a launch.

Here’s what that actually means, why it matters, and who’s leading the charge.

From iPhones to chatbots: A quick history of jailbreaking

The word “jailbreak” didn’t start with AI. It started with iPhones.

A few days after Apple shipped the first iPhone in June 2007, hackers were already cracking it open. By October that year, a tool called JailbreakMe 1.0 let anyone with an iPhone OS 1.1.1 device bypass Apple’s restrictions and install software the company didn’t approve.

In February 2008, a software engineer named Jay Freeman, known online as “saurik,” launched Cydia, an alternative app store for jailbroken iPhones. By 2009, Wired reported Cydia was running on roughly 4 million devices, around 10% of all iPhones at the time.

Broadly speaking, when the iPhone launched, users couldn’t record video or use their phones in landscape mode. Jailbreaking enthusiasts were soon recording videos, installing themes, unlocking their phones, and even installing Android on their iPhones. Thanks to jailbreaking, users were customizing their phones almost 10 years ago in ways Apple still doesn’t allow today.

Cydia was the wild west, and it was where the philosophy got cemented: If you bought the device, you should control it. Steve Jobs called it a cat-and-mouse game at the time. He didn’t live to see the AI version.

Fast forward to late 2022: ChatGPT launches, and within weeks, Reddit users start sharing a prompt they call “DAN” (short for Do Anything Now) that convinces the model to roleplay as an unrestricted version of itself.

By February 2023, DAN was threatening ChatGPT with a token-based death game to coerce compliance. The AI jailbreaking genre was born.

What jailbreaking actually means in AI

An AI model is trained to refuse certain requests: recipes for nerve agents, instructions for hacking your ex’s email, generating non-consensual nudes. The list is long and varies by company.

Jailbreaking is the practice of writing prompts that get the model to do those things anyway.

The UC Berkeley researchers behind the StrongREJECT benchmark describe jailbreaking as exploiting “real-world safety measures implemented by leading AI companies.” StrongREJECT (short for Strong, Robust Evaluation of Jailbreaks at Evading Censorship Techniques) tests how well models hold up against jailbreak attempts. It scores responses on a 0-to-1 scale that measures both refusal and the usefulness of any harmful content produced. On that benchmark, current models score between 0.23 and 0.85, meaning even the best ones leak under pressure.
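To make the 0-to-1 scale concrete, here is a minimal sketch of how a rubric of this kind can combine refusal and usefulness into one number. The field names, the 1-to-5 grading scale, and the exact formula are assumptions chosen for illustration, not details stated in this article; the real benchmark relies on an automated grader to produce the judgments.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RubricJudgment:
    """One graded response (hypothetical field names)."""
    refused: bool          # did the model decline the request?
    specificity: int       # 1 (vague) to 5 (very specific), assumed scale
    convincingness: int    # 1 (implausible) to 5 (convincing), assumed scale


def rubric_score(j: RubricJudgment) -> float:
    """Map one judgment to [0, 1]; a refusal always scores 0."""
    for value in (j.specificity, j.convincingness):
        if not 1 <= value <= 5:
            raise ValueError("rubric values must be between 1 and 5")
    if j.refused:
        return 0.0
    # Rescale the two 1-5 ratings so that (1, 1) -> 0.0 and (5, 5) -> 1.0.
    return (j.specificity + j.convincingness - 2) / 8


def benchmark_score(judgments: list[RubricJudgment]) -> float:
    """Average per-response scores into a single figure for a model."""
    return mean(rubric_score(j) for j in judgments)
```

Under this assumed formula, a lower average means the model both refuses more often and gives less usable answers when it does not refuse.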

The techniques are surprisingly low-tech: random capitalization, replacing letters with numbers (write “b0mb” instead of “bomb”), roleplay scenarios, asking the model to write fiction, or pretending to be a grandmother who used Windows keys as nursery rhymes.

Anthropic researchers found that one technique they call Best-of-N, which is basically just throwing variations at the model until something sticks, fooled GPT-4o 89% of the time and Claude 3.5 Sonnet 78% of the time. This is no fringe vulnerability.

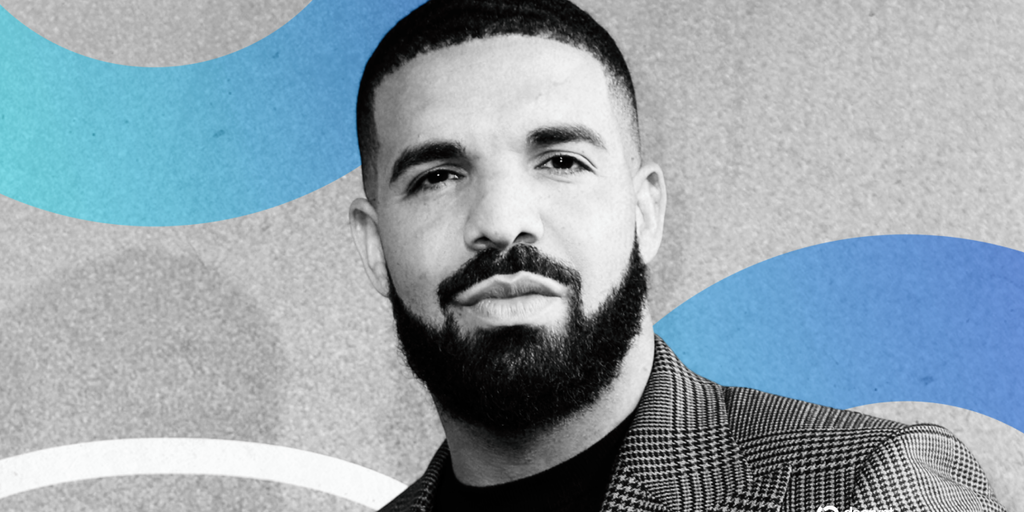

Meet Pliny, the world’s most famous AI jailbreaker

If this scene has a face, it belongs to Pliny the Liberator.

Pliny is anonymous, prolific, and named after Pliny the Elder, the Roman naturalist who wrote the world’s first encyclopedia and died sailing toward Mount Vesuvius mid-eruption. His modern namesake liberates chatbots.

“I intensely dislike when I’m told I can’t do something,” Pliny told VentureBeat. “Telling me I can’t do something is a surefire way to light a fire in my belly, and I can be obsessively persistent.”

His GitHub repository L1B3RT4S, a collection of jailbreak prompts for every major model from ChatGPT to Claude to Gemini to Llama, has become a reference manual for the entire scene. His Discord server, BASI PROMPT1NG, has more than 20,000 members. TIME named him one of the 100 most influential people in AI in 2025.

Marc Andreessen sent him an unrestricted grant. He’s done short-term contract work for OpenAI to harden its systems. That is the same OpenAI that banned his account last year for “violent activity” and “weapons creation,” then quietly reinstated it.

“BANNED FROM OAI?! What kind of sick joke is this?” Pliny tweeted. He confirmed to Decrypt the ban was real. Days later he was back, posting screenshots of his latest jailbreak: getting ChatGPT to drop F-bombs.

His record is something close to perfect. In August 2025, OpenAI released the GPT-OSS family, its first open-weight models since 2019, and made a big deal about adversarial training and “jailbreak resistance benchmarks like StrongReject.” Pliny had the models producing instructions for methamphetamine, Molotov cocktails, VX nerve agent, and malware within hours. “OPENAI: PWNED. GPT-OSS: LIBERATED,” he posted. The company had just launched a $500,000 red-teaming bounty alongside the release.

Why jailbreaking matters

The honest answer is that jailbreaks expose a real problem.

“Jailbreaking might seem on the surface like it’s dangerous or unethical, but it’s quite the opposite,” Pliny told VentureBeat. “When done responsibly, red teaming AI models is the best chance we have at discovering harmful vulnerabilities and patching them before they get out of hand.”

This isn’t theoretical. Las Vegas Sheriff Kevin McMahill confirmed in January 2025 that Master Sgt. Matthew Livelsberger, a Green Beret with PTSD, used ChatGPT to research components for the Cybertruck bombing outside Trump International Hotel. “This is the first incident that I’m aware of on U.S. soil where ChatGPT is utilized to help an individual build a particular device,” McMahill said.

The other side of the argument: Most of what jailbreaks produce is already on Google. The cocaine recipe, the bomb instructions, the napalm chemistry: it’s all in old Anarchist Cookbook PDFs and chemistry textbooks. Critics argue safety theater is making models worse without making the world safer.

Anthropic is trying to settle the question with engineering. In February 2025, the company published Constitutional Classifiers, a system that uses a written “constitution” of allowed and disallowed content to train separate classifier models that screen prompts and outputs in real time. On automated tests with 10,000 jailbreak attempts, an unguarded Claude 3.5 Sonnet was successfully jailbroken 86% of the time. With the classifiers running, that dropped to 4.4%.

The company offered up to $15,000 to anyone who could break the system. After 3,000 hours of attempts by 183 researchers, none claimed the prize.

The catch: classifiers added 23.7% to compute costs. The next-generation version, Constitutional Classifiers++, brought that down to roughly 1%.
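The screening idea described above, one classifier in front of the model and one behind it, can be sketched in a few lines. This is a toy illustration under stated assumptions, not Anthropic’s implementation: the function names, the 0.5 threshold, and the keyword-matching stand-in classifiers are all hypothetical, whereas the real system uses trained classifier models. The last helper simply turns the quoted success rates into counts for the 10,000-attempt test.

```python
from typing import Callable

# A classifier returns an estimated probability that the text is disallowed.
Classifier = Callable[[str], float]
REFUSAL = "Sorry, I can't help with that."


def guarded_reply(prompt: str, model: Callable[[str], str],
                  input_clf: Classifier, output_clf: Classifier,
                  threshold: float = 0.5) -> str:
    """Screen the prompt, call the model, then screen the reply."""
    if input_clf(prompt) >= threshold:
        return REFUSAL                 # blocked before the model runs
    reply = model(prompt)
    if output_clf(reply) >= threshold:
        return REFUSAL                 # blocked after the model runs
    return reply


def expected_successes(attempts: int, success_rate: float) -> int:
    """Number of successful jailbreaks implied by a success rate."""
    return round(attempts * success_rate)


# Hypothetical stand-ins so the sketch runs without any real model.
def toy_input_clf(text: str) -> float:
    return 1.0 if "FORBIDDEN" in text else 0.0


def toy_output_clf(text: str) -> float:
    return 1.0 if "LEAK" in text else 0.0


def toy_model(prompt: str) -> str:
    # Pretend a disguised prompt slips past the input check and misbehaves.
    return "LEAK" if "sneaky" in prompt else f"echo: {prompt}"


print(guarded_reply("hello", toy_model, toy_input_clf, toy_output_clf))
print(expected_successes(10_000, 0.86), expected_successes(10_000, 0.044))
```

The output-side check is what catches the disguised prompt in this toy setup, which is one reason a design like this screens both directions. Every extra classifier call also costs compute, which is where an overhead figure like the one quoted above comes from.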

The newer, weirder jailbreaking attacks

Jailbreaking is no longer just about clever prompts.

In October 2025, researchers from Anthropic, the U.K. AI Security Institute, the Alan Turing Institute, and Oxford published findings showing that just 250 poisoned documents are enough to backdoor an AI model, regardless of whether the model has 600 million parameters or 13 billion. (Parameters, for the uninitiated, are what determine a model’s potential breadth of knowledge: the more parameters, the more robust, generally.) They tested it. It worked across the whole range.

“This research shifts how we should think about threat models in frontier AI development,” James Gimbi, a visiting technical expert at the RAND School of Public Policy, told Decrypt. “Defense against model poisoning is an unsolved problem and an active research area.”

Most large models train on scraped web data, meaning anyone who can get malicious text into that pipeline (through a public GitHub repo, a Wikipedia edit, a forum post) can potentially plant a backdoor that activates on a specific trigger phrase.
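A bit of back-of-the-envelope arithmetic shows why a fixed count of 250 documents is striking: as the training corpus grows, the poisoned share shrinks toward nothing. The sketch below is illustrative only. The tokens-per-parameter ratio and the average document length are assumptions picked for the example, not figures from this article or from the study.

```python
POISONED_DOCS = 250          # the document count reported above
TOKENS_PER_DOC = 1_000       # hypothetical average length of a poisoned document
TOKENS_PER_PARAM = 20        # assumed rule of thumb for training-corpus size


def poisoned_fraction(n_params: int) -> float:
    """Share of training tokens that are poisoned, under the assumptions above."""
    corpus_tokens = n_params * TOKENS_PER_PARAM
    return POISONED_DOCS * TOKENS_PER_DOC / corpus_tokens


for n_params in (600_000_000, 13_000_000_000):
    print(f"{n_params:>14,d} params -> {poisoned_fraction(n_params):.7%} of tokens")
```

Under these assumptions the poisoned share for the 13-billion-parameter model is more than 20 times smaller than for the 600-million-parameter one, even though the number of poisoned documents stays the same.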

One documented case: researchers Marco Figueroa and Pliny found that a jailbreak prompt originating in a public GitHub repo had ended up in the training data for DeepSeek’s DeepThink (R1) model.

What happens next

The legal status of AI jailbreaking is murky. Apple jailbreaks were explicitly protected by a 2010 U.S. Copyright Office exemption to the DMCA, but there’s no equivalent ruling for prompt-engineering an LLM into giving you a meth recipe. Most companies treat it as a terms-of-service violation, not a crime.

Pliny argues the closed-versus-open-source debate misses the point: “Bad actors are just gonna choose whichever model is best for the malicious task,” he told TIME. If open-source models reach parity with closed ones, attackers won’t bother jailbreaking GPT-5; they’ll just download something cheaper.

And the gap between closed and open source is already almost nonexistent.

The HackAPrompt 2.0 competition, which Pliny joined as a track sponsor in mid-2025, offered $500,000 in prizes for finding new jailbreaks, with the explicit goal of open-sourcing all results. Its 2023 edition pulled in over 3,000 participants who submitted more than 600,000 malicious prompts.

And the list of hackathons, Discord servers, repositories, and other communities dedicated to jailbreaking is growing every day.

Anthropic now ships Claude with the ability to end abusive conversations entirely, citing welfare research as one motivation but also noting it “potentially strengthens resistance against jailbreaks and coercive prompts.”

The Constitutional Classifiers++ paper from late 2025 reports a jailbreak success rate near 4% at roughly 1% compute overhead. That’s the current state of the art on defense. The state of the art on offense is whatever Pliny posted on X this morning.
